This page was generated from examples/models/metadata/graph_metadata.ipynb.

Complex Graphs Metadata Example

Prerequisites

A Kubernetes cluster with kubectl configured

curl

pygmentize

Setup Seldon Core

Use the setup notebook to Setup Cluster and install Seldon Core with an ingress.

[1]:

!kubectl create namespace seldon

Error from server (AlreadyExists): namespaces "seldon" already exists

[2]:

!kubectl config set-context $(kubectl config current-context) --namespace=seldon

Context "kind-kind" modified.

Used model

In this example notebook we use a dummy node that can play several roles in the graph: model, input or output transformer, combiner, or router.

The model reads its metadata from the MODEL_METADATA environment variable (this is done automatically).

The actual logic behind each of the node's endpoints is not the subject of this notebook.

We concentrate only on the graph-level metadata that the orchestrator constructs from the metadata reported by each node.

[3]:

%%writefile models/generic-node/Node.py
import logging
import os
import random

# Number of children a router node can choose between
NUMBER_OF_ROUTES = int(os.environ.get("NUMBER_OF_ROUTES", "2"))


class Node:
    # MODEL role: log the request and echo the features back
    def predict(self, features, names=[], meta=[]):
        logging.info(f"model features: {features}")
        logging.info(f"model names: {names}")
        logging.info(f"model meta: {meta}")
        return features.tolist()

    # TRANSFORMER role: behaves like predict
    def transform_input(self, features, names=[], meta=[]):
        return self.predict(features, names, meta)

    # OUTPUT_TRANSFORMER role: behaves like predict
    def transform_output(self, features, names=[], meta=[]):
        return self.predict(features, names, meta)

    # COMBINER role: return the children's outputs as a list
    def aggregate(self, features, names=[], meta=[]):
        logging.info(f"model features: {features}")
        logging.info(f"model names: {names}")
        logging.info(f"model meta: {meta}")
        return [x.tolist() for x in features]

    # ROUTER role: pick one child at random
    def route(self, features, names=[], meta=[]):
        logging.info(f"model features: {features}")
        logging.info(f"model names: {names}")
        logging.info(f"model meta: {meta}")
        # randrange excludes its upper bound, so the index is always a valid child
        route = random.randrange(NUMBER_OF_ROUTES)
        logging.info(f"routing to: {route}")
        return route

Overwriting models/generic-node/Node.py
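A router node must return the index of an existing child. Python's random.randint(a, b) includes both endpoints, so random.randint(0, NUMBER_OF_ROUTES) can return NUMBER_OF_ROUTES itself, while random.randrange(NUMBER_OF_ROUTES) stays in [0, NUMBER_OF_ROUTES - 1]. A quick self-contained check, assuming child indices start at 0:

```python
import random

NUMBER_OF_ROUTES = 2  # same default as NUMBER_OF_ROUTES in Node.py

# Seeded generator so the check is reproducible
rng = random.Random(0)

# randint includes its upper bound, so 2 (no such child) shows up
randint_values = {rng.randint(0, NUMBER_OF_ROUTES) for _ in range(1000)}

# randrange excludes its upper bound, so only the valid indices 0 and 1 appear
randrange_values = {rng.randrange(NUMBER_OF_ROUTES) for _ in range(1000)}

print(sorted(randint_values))    # [0, 1, 2]
print(sorted(randrange_values))  # [0, 1]
```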

Build image

Build the image using the provided Makefile:

cd models/generic-node

make build

If you are using kind, you can use the kind_image_install target to load the image directly into your local cluster.

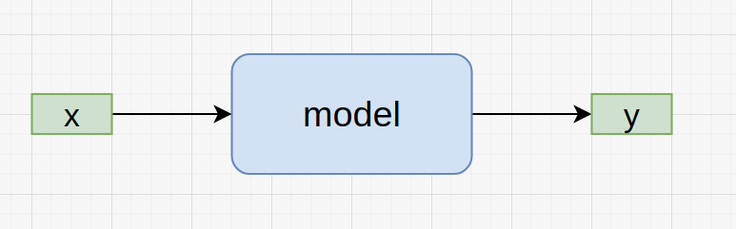

Single Model

In a single-node graph, the model-level inputs and outputs, x and y, are also the deployment-level graphinputs and graphoutputs.
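As a minimal sketch, this rule can be written against plain Python dicts shaped like the metadata responses shown below. The helper name single_node_graph_metadata is hypothetical: it reproduces what the orchestrator reports for this graph, not how Seldon implements it.

```python
from typing import Any, Dict

Metadata = Dict[str, Any]


def single_node_graph_metadata(predictor: str, node: str, model: Metadata) -> Metadata:
    """Hypothetical helper: graph-level metadata for a graph with one MODEL node."""
    return {
        "name": predictor,        # predictor name, "example" in this notebook
        "models": {node: model},  # keyed by graph node name, not by model name
        # A lone model's inputs and outputs are the whole graph's
        "graphinputs": model["inputs"],
        "graphoutputs": model["outputs"],
    }


single_node = {
    "name": "single-node",
    "platform": "seldon",
    "versions": ["generic-node/v0.4"],
    "inputs": [{"messagetype": "tensor", "schema": {"names": ["node-input"], "shape": [1]}}],
    "outputs": [{"messagetype": "tensor", "schema": {"names": ["node-output"], "shape": [1]}}],
}

graph = single_node_graph_metadata("example", "model", single_node)
print(graph["graphinputs"][0]["schema"]["names"])   # ['node-input']
print(graph["graphoutputs"][0]["schema"]["names"])  # ['node-output']
```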

[4]:

%%writefile graph-metadata/single.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-single
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: model
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: single-node
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [node-input]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [node-output]
                  shape: [ 1 ]
    graph:
      name: model
      type: MODEL
      children: []
    name: example
    replicas: 1

Overwriting graph-metadata/single.yaml

[5]:

!kubectl apply -f graph-metadata/single.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-single created

[7]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-single -o jsonpath='{.items[0].metadata.name}')

deployment "graph-metadata-single-example-0-model" successfully rolled out

Graph Level

Graph-level metadata is available at the api/v1.0/metadata endpoint of your deployment:

[8]:

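import time

import requests


def getWithRetry(url):
    # Try up to three times: the deployment may still be starting up
    for i in range(3):
        r = requests.get(url)
        if r.status_code == requests.codes.ok:
            meta = r.json()
            return meta
        else:
            print("Failed request with status code ", r.status_code)
            time.sleep(3)
    # Fail loudly instead of silently returning None
    raise RuntimeError(f"Request to {url} failed after 3 attempts")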
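All metadata URLs in this notebook follow one pattern, http://<host>/seldon/<namespace>/<deployment>/api/v1.0/metadata, with the graph node's name appended for model-level metadata. A small hypothetical helper that builds them, assuming the ingress is reachable on localhost:8003 as in this setup:

```python
from typing import Optional


def metadata_url(
    deployment: str,
    model: Optional[str] = None,
    host: str = "localhost:8003",
    namespace: str = "seldon",
) -> str:
    """Hypothetical helper: graph-level URL, or model-level URL if `model` is given."""
    url = f"http://{host}/seldon/{namespace}/{deployment}/api/v1.0/metadata"
    # Model-level metadata lives one path segment deeper, under the node name
    return f"{url}/{model}" if model else url


print(metadata_url("graph-metadata-two-levels"))
# http://localhost:8003/seldon/seldon/graph-metadata-two-levels/api/v1.0/metadata
```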

[9]:

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-single/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"model": {

"name": "single-node",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-input"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-output"], "shape": [1]},

}

],

}

},

"graphinputs": [

{"messagetype": "tensor", "schema": {"names": ["node-input"], "shape": [1]}}

],

"graphoutputs": [

{"messagetype": "tensor", "schema": {"names": ["node-output"], "shape": [1]}}

],

}

meta

[9]:

{'name': 'example',

'models': {'model': {'name': 'single-node',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-input'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-input'], 'shape': [1]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]}

Model Level

Compare this with the model-level metadata available at the api/v1.0/metadata/model endpoint, where model is the name of the graph node:

[10]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-single/api/v1.0/metadata/model"

)

assert meta == {

"custom": {},

"name": "single-node",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-input"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-output"], "shape": [1]},

}

],

}

meta

[10]:

{'custom': {},

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-input'], 'shape': [1]}}],

'name': 'single-node',

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}],

'platform': 'seldon',

'versions': ['generic-node/v0.4']}
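Judging from the two responses above, the model-level endpoint returns the same entry as models["model"] in the graph-level response, plus a custom field (empty here). A hypothetical check of that relationship on plain dicts:

```python
from typing import Any, Dict

Metadata = Dict[str, Any]

TENSOR_IN = {"messagetype": "tensor", "schema": {"names": ["node-input"], "shape": [1]}}
TENSOR_OUT = {"messagetype": "tensor", "schema": {"names": ["node-output"], "shape": [1]}}

model_entry = {
    "name": "single-node",
    "platform": "seldon",
    "versions": ["generic-node/v0.4"],
    "inputs": [TENSOR_IN],
    "outputs": [TENSOR_OUT],
}
graph_level = {
    "name": "example",
    "models": {"model": model_entry},
    "graphinputs": [TENSOR_IN],
    "graphoutputs": [TENSOR_OUT],
}
model_level = {"custom": {}, **model_entry}


def agrees_with_graph(model_level: Metadata, graph_level: Metadata, node: str) -> bool:
    """True if the model endpoint matches the node's graph-level entry.

    The graph-level entry has no "custom" key, so it is dropped before comparing.
    """
    stripped = {k: v for k, v in model_level.items() if k != "custom"}
    return stripped == graph_level["models"][node]


print(agrees_with_graph(model_level, graph_level, "model"))  # True
```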

[11]:

!kubectl delete -f graph-metadata/single.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-single" deleted

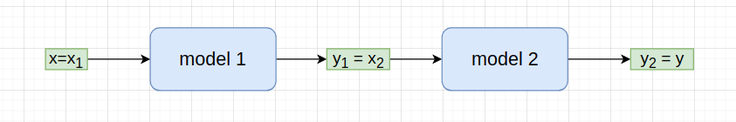

Two-Level Graph

In a two-level graph, the output of the first model is the input of the second model, x2 = y1.

The graph-level input x is the first model’s input x1, and the graph-level output y is the last model’s output y2.
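A minimal sketch of this rule on plain dicts, with nodes listed from parent to child. The helper names and the compatibility check between neighboring nodes are assumptions of this sketch; the notebook does not show how the orchestrator treats mismatched schemas. The tensor helper sets shape to the number of names, which holds for every schema in this notebook but is not a general rule.

```python
from typing import Any, Dict, List

Metadata = Dict[str, Any]


def tensor(*names: str) -> Metadata:
    """Tensor schema as used in this notebook: one column per name."""
    return {"messagetype": "tensor", "schema": {"names": list(names), "shape": [len(names)]}}


def chain_graph_io(chain: List[Metadata]) -> Metadata:
    """graphinputs/graphoutputs for MODEL nodes chained parent -> child."""
    for parent, child in zip(chain, chain[1:]):
        # x2 = y1: each node's outputs should describe the next node's inputs
        if parent["outputs"] != child["inputs"]:
            raise ValueError(f"{parent['name']} -> {child['name']}: schemas differ")
    return {"graphinputs": chain[0]["inputs"], "graphoutputs": chain[-1]["outputs"]}


node_one = {"name": "node-one", "inputs": [tensor("a1", "a2")], "outputs": [tensor("a3")]}
node_two = {"name": "node-two", "inputs": [tensor("a3")], "outputs": [tensor("b1", "b2")]}

io = chain_graph_io([node_one, node_two])
print(io["graphinputs"][0]["schema"])   # {'names': ['a1', 'a2'], 'shape': [2]}
print(io["graphoutputs"][0]["schema"])  # {'names': ['b1', 'b2'], 'shape': [2]}
```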

[17]:

%%writefile graph-metadata/two-levels.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-two-levels
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: node-one
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-one
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [ a1, a2 ]
                  shape: [ 2 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [ a3 ]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node-two
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-two
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [ a3 ]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [b1, b2]
                  shape: [ 2 ]
    graph:
      name: node-one
      type: MODEL
      children:
      - name: node-two
        type: MODEL
        children: []
    name: example
    replicas: 1

Overwriting graph-metadata/two-levels.yaml

[18]:

!kubectl apply -f graph-metadata/two-levels.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-two-levels created

[19]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-two-levels -o jsonpath='{.items[0].metadata.name}')

Waiting for deployment "graph-metadata-two-levels-example-0-node-one-node-two" rollout to finish: 0 of 1 updated replicas are available...

deployment "graph-metadata-two-levels-example-0-node-one-node-two" successfully rolled out

[20]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-two-levels/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"node-one": {

"name": "node-one",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["a1", "a2"], "shape": [2]},

}

],

"outputs": [

{"messagetype": "tensor", "schema": {"names": ["a3"], "shape": [1]}}

],

},

"node-two": {

"name": "node-two",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{"messagetype": "tensor", "schema": {"names": ["a3"], "shape": [1]}}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["b1", "b2"], "shape": [2]},

}

],

},

},

"graphinputs": [

{"messagetype": "tensor", "schema": {"names": ["a1", "a2"], "shape": [2]}}

],

"graphoutputs": [

{"messagetype": "tensor", "schema": {"names": ["b1", "b2"], "shape": [2]}}

],

}

meta

[20]:

{'name': 'example',

'models': {'node-one': {'name': 'node-one',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a1', 'a2'], 'shape': [2]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['a3'], 'shape': [1]}}]},

'node-two': {'name': 'node-two',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a3'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['b1', 'b2'], 'shape': [2]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['a1', 'a2'], 'shape': [2]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['b1', 'b2'], 'shape': [2]}}]}

[38]:

!kubectl delete -f graph-metadata/two-levels.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-two-levels" deleted

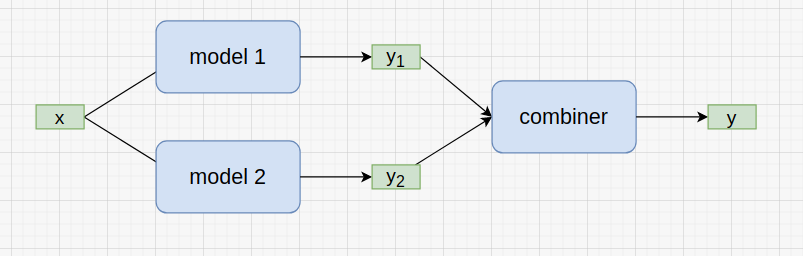

Combiner of two models

In a graph with a combiner, the request is first passed to the combiner’s children, and their outputs are then aggregated by the combiner itself.

Input x is first passed to both models, and their outputs y1 and y2 are passed to the combiner.

The combiner’s output y is the final output of the graph.
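A sketch of the combiner rule on plain dicts. The helper names and both consistency checks are assumptions for illustration; the ordering check in particular assumes the combiner lists one input per child, in child order, which is how this example is set up. The tensor helper sets shape to the number of names, which holds for every schema in this notebook.

```python
from typing import Any, Dict, List

Metadata = Dict[str, Any]


def tensor(*names: str) -> Metadata:
    """Tensor schema as used in this notebook: one column per name."""
    return {"messagetype": "tensor", "schema": {"names": list(names), "shape": [len(names)]}}


def combiner_graph_io(combiner: Metadata, children: List[Metadata]) -> Metadata:
    """graphinputs/graphoutputs for a COMBINER whose children are MODEL nodes."""
    # Every child receives the same request x, so their inputs should agree
    graph_inputs = children[0]["inputs"]
    if any(child["inputs"] != graph_inputs for child in children):
        raise ValueError("children disagree on their inputs")
    # The combiner's inputs should list the children's outputs, in child order
    child_outputs = [out for child in children for out in child["outputs"]]
    if child_outputs != combiner["inputs"]:
        raise ValueError("combiner inputs do not match the children's outputs")
    return {"graphinputs": graph_inputs, "graphoutputs": combiner["outputs"]}


combiner = {"name": "node-combiner", "inputs": [tensor("c1"), tensor("c2")],
            "outputs": [tensor("combiner-output")]}
node_one = {"name": "node-one", "inputs": [tensor("a", "b")], "outputs": [tensor("c1")]}
node_two = {"name": "node-two", "inputs": [tensor("a", "b")], "outputs": [tensor("c2")]}

io = combiner_graph_io(combiner, [node_one, node_two])
print(io["graphoutputs"][0]["schema"])  # {'names': ['combiner-output'], 'shape': [1]}
```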

[21]:

%%writefile graph-metadata/combiner.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-combiner
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: node-combiner
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-combiner
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [ c1 ]
                  shape: [ 1 ]
              - messagetype: tensor
                schema:
                  names: [ c2 ]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [combiner-output]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node-one
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-one
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [a, b]
                  shape: [ 2 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [ c1 ]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node-two
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-two
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [a, b]
                  shape: [ 2 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [ c2 ]
                  shape: [ 1 ]
    graph:
      name: node-combiner
      type: COMBINER
      children:
      - name: node-one
        type: MODEL
        children: []
      - name: node-two
        type: MODEL
        children: []
    name: example
    replicas: 1

Overwriting graph-metadata/combiner.yaml

[22]:

!kubectl apply -f graph-metadata/combiner.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-combiner created

[23]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-combiner -o jsonpath='{.items[0].metadata.name}')

Waiting for deployment "seldon-f3fb28d397222d10648e6c1c7e0bea9e" rollout to finish: 0 of 1 updated replicas are available...

deployment "seldon-f3fb28d397222d10648e6c1c7e0bea9e" successfully rolled out

[24]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-combiner/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"node-combiner": {

"name": "node-combiner",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{"messagetype": "tensor", "schema": {"names": ["c1"], "shape": [1]}},

{"messagetype": "tensor", "schema": {"names": ["c2"], "shape": [1]}},

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["combiner-output"], "shape": [1]},

}

],

},

"node-one": {

"name": "node-one",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["a", "b"], "shape": [2]},

},

],

"outputs": [

{"messagetype": "tensor", "schema": {"names": ["c1"], "shape": [1]}}

],

},

"node-two": {

"name": "node-two",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["a", "b"], "shape": [2]},

},

],

"outputs": [

{"messagetype": "tensor", "schema": {"names": ["c2"], "shape": [1]}}

],

},

},

"graphinputs": [

{"messagetype": "tensor", "schema": {"names": ["a", "b"], "shape": [2]}},

],

"graphoutputs": [

{

"messagetype": "tensor",

"schema": {"names": ["combiner-output"], "shape": [1]},

}

],

}

meta

[24]:

{'name': 'example',

'models': {'node-combiner': {'name': 'node-combiner',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['c1'], 'shape': [1]}},

{'messagetype': 'tensor', 'schema': {'names': ['c2'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['combiner-output'], 'shape': [1]}}]},

'node-one': {'name': 'node-one',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['c1'], 'shape': [1]}}]},

'node-two': {'name': 'node-two',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['c2'], 'shape': [1]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['combiner-output'], 'shape': [1]}}]}

[39]:

!kubectl delete -f graph-metadata/combiner.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-combiner" deleted

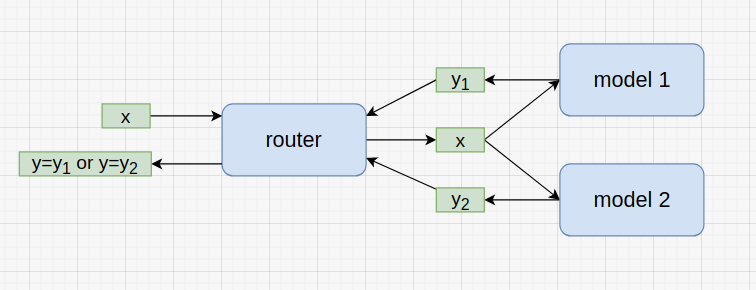

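Router with two models

In this example, the router passes request x to one of its children.

The router then returns that child’s output, y1 or y2, as the graph’s output y.

Here we assume that all children accept similarly structured input and return similarly structured output. Note that node-router has no MODEL_METADATA set, so its entry in the response below falls back to its image name and tag, with empty inputs and outputs.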
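A sketch of the router rule on plain dicts, plus the fallback entry reported for a node without MODEL_METADATA. The fallback is inferred only from the node-router entry in the response below (image name and tag, empty inputs and outputs). Both helper names are hypothetical, and the equality check between children encodes the assumption stated above rather than documented orchestrator behavior.

```python
from typing import Any, Dict, List

Metadata = Dict[str, Any]


def tensor(*names: str) -> Metadata:
    """Tensor schema as used in this notebook: one column per name."""
    return {"messagetype": "tensor", "schema": {"names": list(names), "shape": [len(names)]}}


def router_graph_io(children: List[Metadata]) -> Metadata:
    """graphinputs/graphoutputs for a ROUTER whose children are MODEL nodes."""
    first = children[0]
    # Any child may be picked, so all of them must look alike from the outside
    for child in children[1:]:
        if (child["inputs"], child["outputs"]) != (first["inputs"], first["outputs"]):
            raise ValueError(f"{child['name']} differs from {first['name']}")
    return {"graphinputs": first["inputs"], "graphoutputs": first["outputs"]}


def fallback_metadata(image: str) -> Metadata:
    """Entry for a node without MODEL_METADATA, mirroring node-router's entry.

    Assumes the image reference ends in an explicit ":tag".
    """
    name, _, version = image.rpartition(":")
    return {"name": name, "versions": [version], "inputs": [], "outputs": []}


node_one = {"name": "node-one", "inputs": [tensor("a", "b")], "outputs": [tensor("node-output")]}
node_two = {"name": "node-two", "inputs": [tensor("a", "b")], "outputs": [tensor("node-output")]}

print(router_graph_io([node_one, node_two])["graphoutputs"][0]["schema"]["names"])  # ['node-output']
print(fallback_metadata("seldonio/metadata-generic-node:0.4"))
```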

[25]:

%%writefile graph-metadata/router.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-router
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: node-router
        - image: seldonio/metadata-generic-node:0.4
          name: node-one
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-one
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [ a, b ]
                  shape: [ 2 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [ node-output ]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node-two
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-two
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [ a, b ]
                  shape: [ 2 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [ node-output ]
                  shape: [ 1 ]
    graph:
      name: node-router
      type: ROUTER
      children:
      - name: node-one
        type: MODEL
        children: []
      - name: node-two
        type: MODEL
        children: []
    name: example
    replicas: 1

Overwriting graph-metadata/router.yaml

[26]:

!kubectl apply -f graph-metadata/router.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-router created

[27]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-router -o jsonpath='{.items[0].metadata.name}')

Waiting for deployment "graph-metadata-router-example-0-node-router-node-one-node-two" rollout to finish: 0 of 1 updated replicas are available...

deployment "graph-metadata-router-example-0-node-router-node-one-node-two" successfully rolled out

[29]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-router/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"node-router": {

"name": "seldonio/metadata-generic-node",

"versions": ["0.4"],

"inputs": [],

"outputs": [],

},

"node-one": {

"name": "node-one",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{"messagetype": "tensor", "schema": {"names": ["a", "b"], "shape": [2]}}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-output"], "shape": [1]},

}

],

},

"node-two": {

"name": "node-two",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{"messagetype": "tensor", "schema": {"names": ["a", "b"], "shape": [2]}}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-output"], "shape": [1]},

}

],

},

},

"graphinputs": [

{"messagetype": "tensor", "schema": {"names": ["a", "b"], "shape": [2]}}

],

"graphoutputs": [

{"messagetype": "tensor", "schema": {"names": ["node-output"], "shape": [1]}}

],

}

meta

[29]:

{'name': 'example',

'models': {'node-one': {'name': 'node-one',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]},

'node-router': {'name': 'seldonio/metadata-generic-node',

'versions': ['0.4'],

'inputs': [],

'outputs': []},

'node-two': {'name': 'node-two',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['a', 'b'], 'shape': [2]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]}

[40]:

!kubectl delete -f graph-metadata/router.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-router" deleted

Input Transformer¶

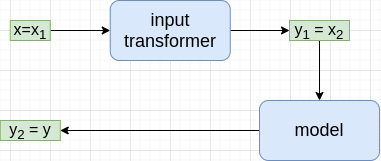

Input transformers work almost exactly the same as chained nodes, see two-level example above.

Following graph is presented in a way that is suppose to make next example (output transfomer) more intuitive.
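A minimal sketch on plain dicts, assuming the rule matches the two-level chain: data flows in tree order, so the graph takes its inputs from the transformer and its outputs from the child. The helper name input_transformer_graph_io is hypothetical.

```python
from typing import Any, Dict

Metadata = Dict[str, Any]


def tensor(name: str) -> Metadata:
    """Single-column tensor schema, as used by both nodes below."""
    return {"messagetype": "tensor", "schema": {"names": [name], "shape": [1]}}


def input_transformer_graph_io(transformer: Metadata, child: Metadata) -> Metadata:
    """graphinputs/graphoutputs for a TRANSFORMER with one MODEL child.

    Data flows in tree order: the transformer first, then its child.
    """
    data_flow = [transformer, child]
    return {"graphinputs": data_flow[0]["inputs"], "graphoutputs": data_flow[-1]["outputs"]}


transformer = {"name": "node-input-transformer",
               "inputs": [tensor("transformer-input")],
               "outputs": [tensor("transformer-output")]}
node = {"name": "node",
        "inputs": [tensor("transformer-output")],
        "outputs": [tensor("node-output")]}

io = input_transformer_graph_io(transformer, node)
print(io["graphinputs"][0]["schema"]["names"])   # ['transformer-input']
print(io["graphoutputs"][0]["schema"]["names"])  # ['node-output']
```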

[30]:

%%writefile graph-metadata/input-transformer.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-input
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: node-input-transformer
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-input-transformer
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [transformer-input]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [transformer-output]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [transformer-output]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [node-output]
                  shape: [ 1 ]
    graph:
      name: node-input-transformer
      type: TRANSFORMER
      children:
      - name: node
        type: MODEL
        children: []
    name: example
    replicas: 1

Overwriting graph-metadata/input-transformer.yaml

[31]:

!kubectl apply -f graph-metadata/input-transformer.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-input created

[32]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-input -o jsonpath='{.items[0].metadata.name}')

Waiting for deployment "graph-metadata-input-example-0-node-input-transformer-node" rollout to finish: 0 of 1 updated replicas are available...

deployment "graph-metadata-input-example-0-node-input-transformer-node" successfully rolled out

[33]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-input/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"node-input-transformer": {

"name": "node-input-transformer",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-input"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-output"], "shape": [1]},

}

],

},

"node": {

"name": "node",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-output"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-output"], "shape": [1]},

}

],

},

},

"graphinputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-input"], "shape": [1]},

}

],

"graphoutputs": [

{"messagetype": "tensor", "schema": {"names": ["node-output"], "shape": [1]}}

],

}

meta

[33]:

{'name': 'example',

'models': {'node': {'name': 'node',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-output'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]},

'node-input-transformer': {'name': 'node-input-transformer',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-input'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-output'], 'shape': [1]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-input'], 'shape': [1]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-output'], 'shape': [1]}}]}

[41]:

!kubectl delete -f graph-metadata/input-transformer.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-input" deleted

Output Transformer¶

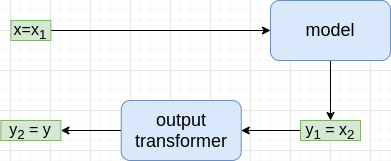

Output transformers work almost exactly opposite as chained nodes in the two-level example above.

Input x is first passed to the model that is child of the output-transformer before it is passed to it.
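A minimal sketch on plain dicts: the graph lists the output transformer as the parent, but data flows from the child to the transformer, so the graph takes its inputs from the child and its outputs from the transformer. The helper name output_transformer_graph_io is hypothetical and only mirrors the response shown below.

```python
from typing import Any, Dict

Metadata = Dict[str, Any]


def tensor(name: str) -> Metadata:
    """Single-column tensor schema, as used by both nodes below."""
    return {"messagetype": "tensor", "schema": {"names": [name], "shape": [1]}}


def output_transformer_graph_io(transformer: Metadata, child: Metadata) -> Metadata:
    """graphinputs/graphoutputs for an OUTPUT_TRANSFORMER with one MODEL child.

    Data flows against tree order: the child sees the request first,
    and the transformer sees the child's output last.
    """
    data_flow = [child, transformer]  # reverse of the tree order
    return {"graphinputs": data_flow[0]["inputs"], "graphoutputs": data_flow[-1]["outputs"]}


transformer = {"name": "node-output-transformer",
               "inputs": [tensor("transformer-input")],
               "outputs": [tensor("transformer-output")]}
node = {"name": "node",
        "inputs": [tensor("node-input")],
        "outputs": [tensor("transformer-input")]}

io = output_transformer_graph_io(transformer, node)
print(io["graphinputs"][0]["schema"]["names"])   # ['node-input']
print(io["graphoutputs"][0]["schema"]["names"])  # ['transformer-output']
```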

[34]:

%%writefile graph-metadata/output-transformer.yaml
apiVersion: machinelearning.seldon.io/v1
kind: SeldonDeployment
metadata:
  name: graph-metadata-output
spec:
  name: test-deployment
  predictors:
  - componentSpecs:
    - spec:
        containers:
        - image: seldonio/metadata-generic-node:0.4
          name: node-output-transformer
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node-output-transformer
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [transformer-input]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [transformer-output]
                  shape: [ 1 ]
        - image: seldonio/metadata-generic-node:0.4
          name: node
          env:
          - name: MODEL_METADATA
            value: |
              ---
              name: node
              versions: [ generic-node/v0.4 ]
              platform: seldon
              inputs:
              - messagetype: tensor
                schema:
                  names: [node-input]
                  shape: [ 1 ]
              outputs:
              - messagetype: tensor
                schema:
                  names: [transformer-input]
                  shape: [ 1 ]
    graph:
      name: node-output-transformer
      type: OUTPUT_TRANSFORMER
      children:
      - name: node
        type: MODEL
        children: []
    name: example
    replicas: 1

Overwriting graph-metadata/output-transformer.yaml

[42]:

!kubectl apply -f graph-metadata/output-transformer.yaml

seldondeployment.machinelearning.seldon.io/graph-metadata-output configured

[43]:

!kubectl rollout status deploy/$(kubectl get deploy -l seldon-deployment-id=graph-metadata-output -o jsonpath='{.items[0].metadata.name}')

deployment "graph-metadata-output-example-0-node-output-transformer-node" successfully rolled out

[44]:

import requests

meta = getWithRetry(

"http://localhost:8003/seldon/seldon/graph-metadata-output/api/v1.0/metadata"

)

assert meta == {

"name": "example",

"models": {

"node-output-transformer": {

"name": "node-output-transformer",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-input"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-output"], "shape": [1]},

}

],

},

"node": {

"name": "node",

"platform": "seldon",

"versions": ["generic-node/v0.4"],

"inputs": [

{

"messagetype": "tensor",

"schema": {"names": ["node-input"], "shape": [1]},

}

],

"outputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-input"], "shape": [1]},

}

],

},

},

"graphinputs": [

{"messagetype": "tensor", "schema": {"names": ["node-input"], "shape": [1]}}

],

"graphoutputs": [

{

"messagetype": "tensor",

"schema": {"names": ["transformer-output"], "shape": [1]},

}

],

}

meta

[44]:

{'name': 'example',

'models': {'node': {'name': 'node',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-input'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-input'], 'shape': [1]}}]},

'node-output-transformer': {'name': 'node-output-transformer',

'platform': 'seldon',

'versions': ['generic-node/v0.4'],

'inputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-input'], 'shape': [1]}}],

'outputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-output'], 'shape': [1]}}]}},

'graphinputs': [{'messagetype': 'tensor',

'schema': {'names': ['node-input'], 'shape': [1]}}],

'graphoutputs': [{'messagetype': 'tensor',

'schema': {'names': ['transformer-output'], 'shape': [1]}}]}

[45]:

!kubectl delete -f graph-metadata/output-transformer.yaml

seldondeployment.machinelearning.seldon.io "graph-metadata-output" deleted